2026-04-26

The Agentic Stack Is Getting Real: DeepSeek-V4, Cloudflare, Google Code AI, and TPU 8i

A breakdown of DeepSeek-V4, Cloudflare's agentic cloud, Google's AI-generated code milestone, and TPU 8t/8i infrastructure for agentic AI.

This week had a common thread across four very different AI stories:

AI is moving from “model as chatbot” to “model as worker inside a system.”

That shift changes what matters.

The model still matters, but it is no longer enough. Agents need long context, tool execution, memory, sandboxing, identity, observability, inference economics, and a human review loop. The companies making real progress are not just releasing better demos. They are building the missing infrastructure around the model.

Here are the four updates worth paying attention to.

1. DeepSeek-V4 shows what open agent models need next

Source

Hugging Face: DeepSeek-V4DeepSeek-V4 is interesting because it is aimed at a real failure mode in agents: long-running work.

Most coding and research agents do not fail only because the base model is weak. They fail because the execution trace grows. Tool outputs pile up. The KV cache becomes expensive. The model loses state across tool calls or user follow-ups. Eventually the agent slows down, forgets important context, or needs a human to restart the workflow.

According to the Hugging Face write-up, DeepSeek-V4 targets this with:

- a 1M-token context window

- DeepSeek-V4-Pro at 1.6T total parameters with 49B active

- DeepSeek-V4-Flash at 284B total parameters with 13B active

- hybrid attention using Compressed Sparse Attention and Heavily Compressed Attention

- FP8/FP4 storage choices to reduce KV-cache pressure

- reasoning preserved across tool-call-heavy workflows

- a dedicated tool-call schema

- sandbox infrastructure for training agents against real tool environments

The important point is not simply “1M tokens.”

A large context window is capacity. It is not performance by itself. For an agent, every new tool result increases the sequence that later tokens must attend over. If the attention and KV-cache costs are too high, the model may technically support a huge context window while still being painful to run in real workflows.

DeepSeek-V4 is notable because it is trying to make long context usable for agentic tasks, not just available on a spec sheet.

What this means for local AI

For the local AI ecosystem, this is both exciting and sobering.

It is exciting because open models are starting to optimize around the shape of real agent workloads:

- code execution

- terminal sessions

- browser tasks

- retrieval

- multi-step tool use

- long context

- persistent reasoning over a task

That is exactly where local AI needs to go. The future local AI stack is not just “a chatbot on your laptop.” It is a local or hybrid agent runtime: model, memory, tools, sandbox, permissions, retrieval, logs, and task orchestration.

But the hardware reality is still brutal.

DeepSeek-V4-Pro is a 1.6T total parameter MoE model. Even with only 49B active parameters and aggressive FP4/FP8 formats, this is far beyond what most developers can run locally. DeepSeek-V4-Flash is smaller, but 284B total parameters is still nowhere near casual local deployment for most people.

Most local AI users are working with:

- 8GB VRAM

- 16GB VRAM

- 24GB VRAM

- sometimes 48GB VRAM

- Apple Silicon unified memory setups

- small home servers

That is enough for smaller quantized models, local coding assistants, RAG workflows, and narrow automation. It is not enough for the full DeepSeek-V4 long-context agent experience at serious throughput.

So the right takeaway is not “local AI caught up with frontier agent systems.”

The better takeaway:

DeepSeek-V4 points toward the architecture local AI will eventually absorb through smaller distilled models, quantization, better runtimes, hybrid local/cloud execution, and tool-aware agent frameworks.

For developers, the practical lesson is this:

The winning local AI stack will not be the biggest model you can download. It will be the system that manages context, memory, tools, sandboxing, and cost the best.

2. Cloudflare is building cloud primitives for agents

Source

Cloudflare: Agents Week 2026 wrap-upCloudflare is one of the most interesting companies to watch here because it tends to understand infrastructure shifts early.

Its Agents Week wrap-up is not just a list of AI features. It reads more like a map of what an agentic cloud needs.

Cloudflare frames agents as a new primary workload. That matters because agents are not normal web apps. They can run for longer, call tools, generate code, access internal systems, browse websites, send email, and operate across user boundaries. That means they need more than an API call to an LLM.

Some examples from Cloudflare’s launch set:

- Sandboxes give agents isolated computers with a shell, filesystem, and background processes.

- Artifacts provides Git-compatible versioned storage for code and data.

- Outbound Workers for Sandboxes add zero-trust egress controls.

- Durable Object Facets give AI-generated apps isolated SQLite databases.

- Workflows now supports higher concurrency for durable background agents.

- Cloudflare Mesh provides scoped private network access for users, nodes, agents, and Workers.

- Managed OAuth for Access helps agents authenticate without insecure service accounts.

- Cloudflare’s MCP reference architecture addresses governance and Shadow MCP detection.

- Agent Memory gives agents persistent managed memory.

- AI Search provides a retrieval primitive for agents.

- Browser Run gives agents browser access with human-in-the-loop support and session recording.

- Cloudflare Email Service gives agents a native email channel.

That is the important pattern.

The future is not one magic assistant. It is many agents running inside infrastructure with clear constraints.

An agent runtime needs:

- compute

- memory

- browser access

- search

- tool permissions

- identity

- private network access

- storage

- sandboxing

- logs

- cost controls

- deployment path

This is why I like Cloudflare’s direction. They are not just asking, “How do we add AI to the dashboard?”

They are asking a deeper infrastructure question:

What should the cloud look like when agents become normal workloads?

That is the right question.

For engineering teams, the takeaway is practical. If you are building agents, do not start with a demo-only architecture. Start with the runtime boundary:

- What can the agent access?

- Where does generated code run?

- Where does memory live?

- How are credentials scoped?

- How do you replay or inspect actions?

- How do you move a prototype into production?

- What happens when 1,000 agents run at once?

The teams that answer those questions early will ship safer agentic systems.

3. Google says 75% of new code is AI-generated, but the key word is “approved”

Source

Google: Sundar Pichai at Cloud Next 2026Google said that 75% of all new code at Google is now AI-generated and approved by engineers, up from 50% last fall.

The important word is not “generated.”

It is “approved.”

That distinction matters for software developers.

AI-generated code does not remove engineering responsibility. It moves the bottleneck. The old bottleneck was often typing and implementation speed. The new bottleneck is judgment:

- Is the design correct?

- Does the implementation match the architecture?

- Are edge cases covered?

- Is the generated code maintainable?

- Are tests meaningful?

- Does this create a migration risk?

- Does it preserve security boundaries?

- Can the team debug it six months later?

Google also described engineers shifting into more agentic workflows, including autonomous digital task forces, faster code migrations, and Antigravity being used to move from idea to native Swift prototype in days.

This is a strong signal for the direction of software engineering.

The developer job is not becoming “press button, receive code.”

It is becoming:

- scope the task

- constrain the agent

- review the output

- write or validate tests

- understand the system impact

- own production behavior

For junior developers, this raises the bar. If your main skill is syntax, AI makes that less defensible. If you understand systems, debugging, testing, tradeoffs, architecture, and product context, AI gives you more leverage.

For senior developers, this is not a threat. It is an amplifier.

But only if you stay in the loop as an engineer, not just a prompt operator.

The best AI-assisted developers will be the ones who can say:

“This code compiles, but the abstraction is wrong.”

“This migration passes tests, but it creates a rollback risk.”

“This solution works for the happy path, but violates the ownership boundary.”

“This generated code is clever, but not maintainable.”

That is where real engineering judgment shows up.

4. Google’s TPU 8t and TPU 8i show why agentic AI is an infrastructure problem

Source

Google Cloud: TPU 8t and TPU 8i technical deep diveGoogle’s new TPU 8t and TPU 8i systems are a useful signal because they separate training and inference more explicitly.

Google says the infrastructure requirements for pre-training, post-training, and real-time serving have diverged. That is exactly what agentic workloads are forcing.

TPU 8t is optimized for large-scale pre-training and embedding-heavy workloads. It uses a 3D torus network topology and scales to 9,600 chips in a single superpod. Google highlights SparseCore, native FP4, Virgo networking, TPUDirect RDMA, and TPU Direct Storage as pieces of the training path.

TPU 8i is optimized for post-training, high-concurrency reasoning, sampling, and serving. This is the more agent-specific side. Google says TPU 8i includes:

- 3x more on-chip SRAM than the previous generation

- a Collectives Acceleration Engine

- a serving-optimized Boardfly topology

- 288GB HBM capacity

- 8,601GB/s HBM bandwidth

- up to 1,152 chips in a pod

- up to 80% better inference price-performance over Ironwood for low-latency large MoE model serving

The Boardfly topology is especially interesting. Google explains that a traditional 3D torus is efficient for many dense training patterns, but can create more hops for all-to-all communication. In reasoning and MoE workloads, tokens may need to route across chips, so network diameter affects tail latency. Boardfly reduces the network diameter for a 1,024-chip pod from 16 hops to seven.

This is the agentic-era infrastructure story in one sentence:

Agents are inference monsters.

They do not just answer once. They reason, call tools, wait, continue, branch, retry, search, execute, and often run in parallel. That makes latency, memory bandwidth, synchronization, batching, and cost per completed task first-class product concerns.

For product teams, the model is no longer the whole product.

The system around the model is the product:

- model

- inference runtime

- memory

- context management

- tool execution

- networking

- security

- observability

- cost controls

That is why the TPU 8t/8i split matters. It reflects a larger industry shift from “train bigger models” to “operate intelligent systems efficiently.”

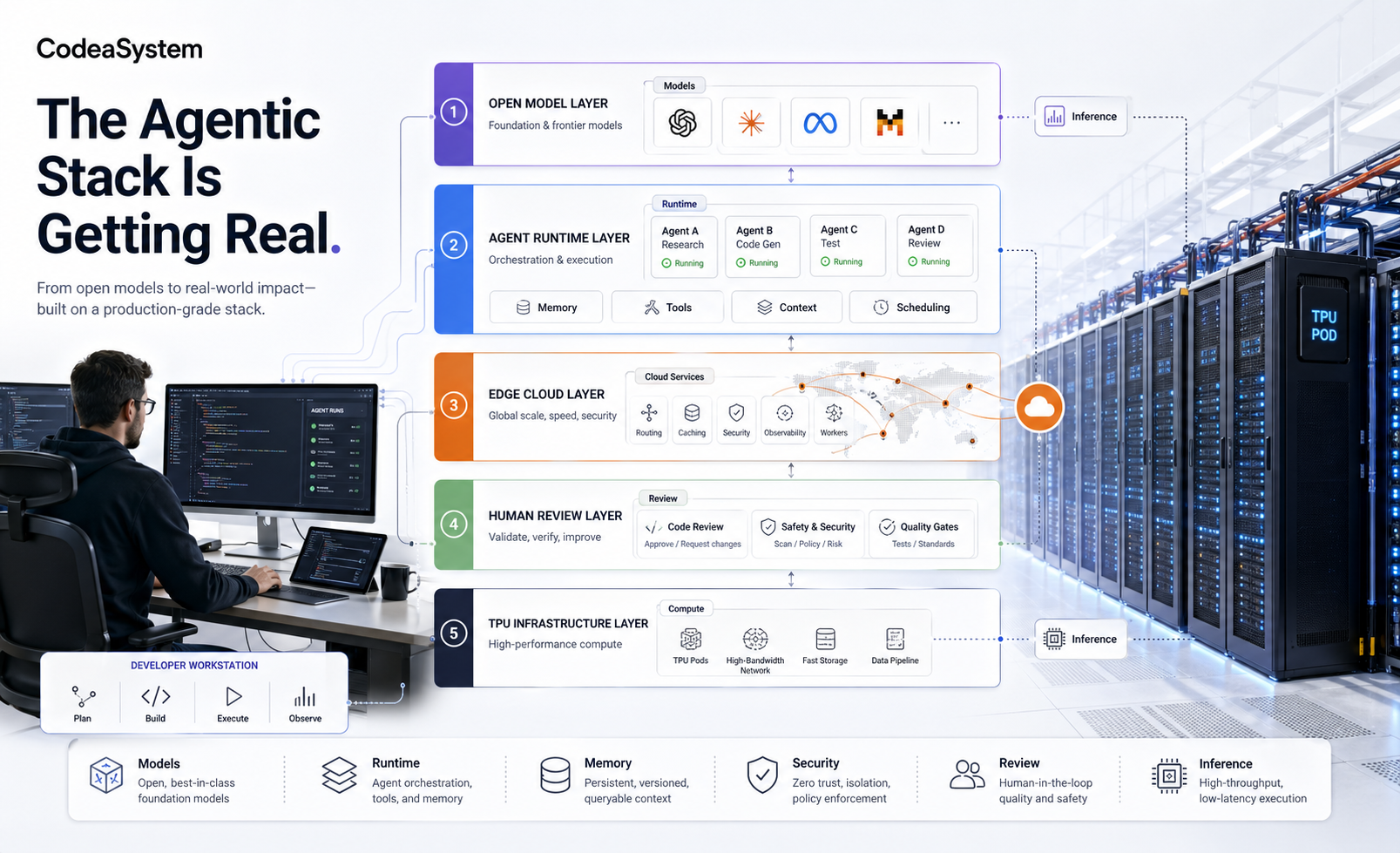

The larger pattern: agents need a stack

These four stories are connected.

DeepSeek-V4 shows what model architecture needs to handle long-running tool use.

Cloudflare shows what cloud infrastructure needs to safely run agents.

Google’s internal coding milestone shows what developer workflows look like when AI output becomes normal.

Google’s TPU 8i shows what serving infrastructure needs when millions of reasoning agents run concurrently.

Put together, the agentic stack looks like this:

Model layer

Long context, tool use, reasoning, efficient KV cache, structured tool calls.Runtime layer

Sandboxes, background execution, browser access, filesystem, terminal, workflow engine.Memory and retrieval layer

Persistent memory, search, embeddings, context ranking, state management.Security layer

OAuth, scoped permissions, egress control, private network access, non-human identity.Developer workflow layer

AI-generated code, review, tests, migrations, production ownership.Infrastructure layer

Training chips, inference chips, low-latency networking, memory bandwidth, cost per task.

This is why “which model is best?” is becoming an incomplete question.

The better question is:

Can the system run useful work safely, cheaply, and repeatedly?

That is the real engineering bar for agentic AI.

Practical takeaways for builders

If you are a developer, founder, or engineering lead, here is the useful version:

Do not design agents like chatbots. Design them like constrained workers.

Give them:

- a clear task boundary

- limited tools

- scoped credentials

- memory with expiration rules

- logs and replay

- cost limits

- tests around important actions

- human approval for risky steps

Do not assume local AI is ready to replace cloud AI for frontier agent workloads. Local AI is powerful and improving, but full DeepSeek-V4-class workflows are still far beyond most personal systems. Use local models where they fit: privacy-sensitive tasks, narrow coding helpers, retrieval, offline automation, and small agents. Use cloud or hybrid setups when the workload needs frontier reasoning, huge context, or high throughput.

Do not treat AI-generated code as free engineering. It still needs review, testing, architecture, ownership, and production discipline.

Do not ignore inference economics. In agentic systems, latency and cost compound quickly because one task may involve many model calls.

The next strong AI product will not be a wrapper around a model.

It will be a well-engineered system where the model is only one component.